This document describes the adversary model, design requirements, and implementation of the Tor Browser. It is current as of Tor Browser 2.3.25-5 and Torbutton 1.5.1.

This document is also meant to serve as a set of design requirements and to describe a reference implementation of a Private Browsing Mode that defends against active network adversaries, in addition to the passive forensic local adversary currently addressed by the major browsers.

The Tor Browser is based on Mozilla's Extended Support Release (ESR) Firefox branch. We have a series of patches against this browser to enhance privacy and security. Browser behavior is additionally augmented through the Torbutton extension, though we are in the process of moving this functionality into direct Firefox patches. We also change a number of Firefox preferences from their defaults.

To help protect against potential Tor Exit Node eavesdroppers, we include HTTPS-Everywhere. To provide users with optional defense-in-depth against Javascript and other potential exploit vectors, we also include NoScript. To protect against PDF-based Tor proxy bypass and to improve usability, we include the PDF.JS extension. We also modify several extension preferences from their defaults.

The Tor Browser Design Requirements are meant to describe the properties of a Private Browsing Mode that defends against both network and local forensic adversaries.

There are two main categories of requirements: Security Requirements, and Privacy Requirements. Security Requirements are the minimum properties in order for a browser to be able to support Tor and similar privacy proxies safely. Privacy requirements are the set of properties that cause us to prefer one browser over another.

While we will endorse the use of browsers that meet the security requirements, it is primarily the privacy requirements that cause us to maintain our own browser distribution.

The key words "MUST", "MUST NOT", "REQUIRED", "SHALL", "SHALL NOT", "SHOULD", "SHOULD NOT", "RECOMMENDED", "MAY", and "OPTIONAL" in this document are to be interpreted as described in RFC 2119.

The security requirements are primarily concerned with ensuring the safe use of Tor. Violations in these properties typically result in serious risk for the user in terms of immediate deanonymization and/or observability. With respect to browser support, security requirements are the minimum properties in order for Tor to support the use of a particular browser.

- Proxy Obedience

The browser MUST NOT bypass Tor proxy settings for any content.

- State Separation

The browser MUST NOT provide the content window with any state from any other browsers or any non-Tor browsing modes. This includes shared state from independent plugins, and shared state from Operating System implementations of TLS and other support libraries.

- Disk Avoidance

The browser MUST NOT write any information that is derived from or that reveals browsing activity to the disk, or store it in memory beyond the duration of one browsing session, unless the user has explicitly opted to store their browsing history information to disk.

- Application Data Isolation

The components involved in providing private browsing MUST be self-contained, or MUST provide a mechanism for rapid, complete removal of all evidence of the use of the mode. In other words, the browser MUST NOT write or cause the operating system to write any information about the use of private browsing to disk outside of the application's control. The user must be able to ensure that secure deletion of the software is sufficient to remove evidence of the use of the software. All exceptions and shortcomings due to operating system behavior MUST be wiped by an uninstaller. However, due to permissions issues with access to swap, implementations MAY choose to leave it out of scope, and/or leave it to the Operating System/platform to implement ephemeral-keyed encrypted swap.

The privacy requirements are primarily concerned with reducing linkability: the ability for a user's activity on one site to be linked with their activity on another site without their knowledge or explicit consent. With respect to browser support, privacy requirements are the set of properties that cause us to prefer one browser over another.

For the purposes of the unlinkability requirements of this section as well as the descriptions in the implementation section, a url bar origin means at least the second-level DNS name. For example, for mail.google.com, the origin would be google.com. Implementations MAY, at their option, restrict the url bar origin to be the entire fully qualified domain name.
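
The origin rule above can be illustrated with a minimal Python sketch. The function name `url_bar_origin` and the `use_fqdn` flag are hypothetical, and the "last two labels" heuristic is a simplifying assumption: it gives the wrong answer for suffixes such as co.uk, where a real implementation would need a public suffix list.

```python
def url_bar_origin(hostname: str, use_fqdn: bool = False) -> str:
    """Return the url bar origin for a hostname (illustrative only).

    Assumption: the second-level DNS name is simply the last two labels.
    This is NOT correct for multi-label public suffixes such as co.uk.
    """
    host = hostname.strip().rstrip(".").lower()
    if use_fqdn:
        # Stricter option permitted by the text: the entire FQDN.
        return host
    labels = host.split(".")
    return ".".join(labels[-2:])
```

With this sketch, `url_bar_origin("mail.google.com")` yields `google.com`, matching the example in the text, while passing `use_fqdn=True` keeps the full `mail.google.com`.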

- Cross-Origin Identifier Unlinkability

User activity on one url bar origin MUST NOT be linkable to their activity in any other url bar origin by any third party automatically or without user interaction or approval. This requirement specifically applies to linkability from stored browser identifiers, authentication tokens, and shared state. The requirement does not apply to linkable information the user manually submits to sites, or due to information submitted during manual link traversal. This functionality SHOULD NOT interfere with interactive, click-driven federated login in a substantial way.

- Cross-Origin Fingerprinting Unlinkability

User activity on one url bar origin MUST NOT be linkable to their activity in any other url bar origin by any third party. This property specifically applies to linkability from fingerprinting browser behavior.

- Long-Term Unlinkability

The browser MUST provide an obvious, easy way for the user to remove all of its authentication tokens and browser state and obtain a fresh identity. Additionally, the browser SHOULD clear linkable state by default automatically upon browser restart, except at user option.

In addition to the above design requirements, the technology decisions about Tor Browser are also guided by some philosophical positions about technology.

- Preserve existing user model

The existing way that the user expects to use a browser must be preserved. If the user has to maintain a different mental model of how the sites they are using behave depending on tab, browser state, or anything else that would not normally be what they experience in their default browser, the user will inevitably be confused. They will make mistakes and reduce their privacy as a result. Worse, they may just stop using the browser, assuming it is broken.

User model breakage was one of the failures of Torbutton: Even if users managed to install everything properly, the toggle model was too hard for the average user to understand, especially in the face of accumulating tabs from multiple states crossed with the current Tor-state of the browser.

- Favor the implementation mechanism least likely to break sites

In general, we try to find solutions to privacy issues that will not induce site breakage, though this is not always possible.

- Plugins must be restricted

Even if plugins always properly used the browser proxy settings (which none of them do) and could not be induced to bypass them (which all of them can), the activities of closed-source plugins are very difficult to audit and control. They can obtain and transmit all manner of system information to websites, often have their own identifier storage for tracking users, and also contribute to fingerprinting.

Therefore, if plugins are to be enabled in private browsing modes, they must be restricted from running automatically on every page (via click-to-play placeholders), and/or be sandboxed to restrict the types of system calls they can execute. If the user agent allows the user to craft an exemption to allow a plugin to be used automatically, it must only apply to the top level url bar domain, and not to all sites, to reduce cross-origin fingerprinting linkability.

- Minimize Global Privacy Options

Another failure of Torbutton was the options panel. Each option that detectably alters browser behavior can be used as a fingerprinting tool. Similarly, all extensions should be disabled in the mode except on an opt-in basis. We should not load system-wide and/or Operating System provided addons or plugins.

Instead of global browser privacy options, privacy decisions should be made per url bar origin to eliminate the possibility of linkability between domains. For example, when a plugin object (or a Javascript access of navigator.plugins) is present in a page, the user should be given the choice of allowing that plugin object for that url bar origin only. The same goes for exemptions to third party cookie policy, geo-location, and any other privacy permissions.

If the user has indicated they wish to record local history storage, these permissions can be written to disk. Otherwise, they should remain memory-only.

- No filters

Site-specific or filter-based addons such as AdBlock Plus, Request Policy, Ghostery, Priv3, and Sharemenot are to be avoided. We believe that these addons do not add any real privacy to a proper implementation of the above privacy requirements, and that development efforts should be focused on general solutions that prevent tracking by all third parties, rather than a list of specific URLs or hosts.

Filter-based addons can also introduce strange breakage and cause usability nightmares, and will also fail to do their job if an adversary simply registers a new domain or creates a new url path. Worse still, the unique filter sets that each user creates or installs will provide a wealth of fingerprinting targets.

As a general matter, we are also opposed to shipping an always-on ad blocker with Tor Browser. We feel that this would damage our credibility in terms of demonstrating that we are providing privacy through a sound design alone, as well as damage the acceptance of Tor users by sites that support themselves through advertising revenue.

Users are free to install these addons if they wish, but doing so is not recommended, as it will alter the browser request fingerprint.

- Stay Current

We believe that if we do not stay current with the support of new web technologies, we cannot hope to substantially influence or be involved in their proper deployment or privacy realization. However, we will likely disable high-risk features pending analysis, audit, and mitigation.

A Tor web browser adversary has a number of goals, capabilities, and attack types that can be used to illustrate the design requirements for the Tor Browser. Let's start with the goals.

- Bypassing proxy settings

The adversary's primary goal is direct compromise and bypass of Tor, causing the user to directly connect to an IP of the adversary's choosing.

- Correlation of Tor vs Non-Tor Activity

If direct proxy bypass is not possible, the adversary will likely happily settle for the ability to correlate something a user did via Tor with their non-Tor activity. This can be done with cookies, cache identifiers, javascript events, and even CSS. Sometimes the fact that a user uses Tor may be enough for some authorities.

- History disclosure

The adversary may also be interested in history disclosure: the ability to query a user's history to see if they have issued certain censored search queries, or visited censored sites.

- Correlate activity across multiple sites

The primary goal of the advertising networks is to know that the user who visited siteX.com is the same user that visited siteY.com to serve them targeted ads. The advertising networks become our adversary insofar as they attempt to perform this correlation without the user's explicit consent.

- Fingerprinting/anonymity set reduction

Fingerprinting (more generally: "anonymity set reduction") is used to attempt to gather identifying information on a particular individual without the use of tracking identifiers. If the dissident or whistleblower's timezone is available, and they are using a rare build of Firefox for an obscure operating system, and they have a specific display resolution only used on one type of laptop, this can be very useful information for tracking them down, or at least tracking their activities.

- History records and other on-disk information

In some cases, the adversary may opt for a heavy-handed approach, such as seizing the computers of all Tor users in an area (especially after narrowing the field by the above two pieces of information). History records and cache data are the primary goals here.

The adversary can position themselves at a number of different locations in order to execute their attacks.

- Exit Node or Upstream Router

The adversary can run exit nodes, or alternatively, they may control routers upstream of exit nodes. Both of these scenarios have been observed in the wild.

- Ad servers and/or Malicious Websites

The adversary can also run websites, or more likely, they can contract out ad space from a number of different ad servers and inject content that way. For some users, the adversary may be the ad servers themselves. It is not inconceivable that ad servers may try to subvert or reduce a user's anonymity through Tor for marketing purposes.

- Local Network/ISP/Upstream Router

The adversary can also inject malicious content at the user's upstream router when they have Tor disabled, in an attempt to correlate their Tor and Non-Tor activity.

Additionally, at this position the adversary can block Tor, or attempt to recognize the traffic patterns of specific web pages at the entrance to the Tor network.

- Physical Access

Some users face adversaries with intermittent or constant physical access. Users in Internet cafes, for example, face such a threat. In addition, in countries where simply using tools like Tor is illegal, users may face confiscation of their computer equipment for excessive Tor usage or just general suspicion.

The adversary can perform the following attacks from a number of different positions to accomplish various aspects of their goals. It should be noted that many of these attacks (especially those involving IP address leakage) are often performed by accident by websites that simply have Javascript, dynamic CSS elements, and plugins. Others are performed by ad servers seeking to correlate users' activity across different IP addresses, and still others are performed by malicious agents on the Tor network and at national firewalls.

- Read and insert identifiers

The browser contains multiple facilities for storing identifiers that the adversary creates for the purposes of tracking users. These identifiers are most obviously cookies, but also include HTTP auth, DOM storage, cached scripts and other elements with embedded identifiers, client certificates, and even TLS Session IDs.

An adversary in a position to perform MITM content alteration can inject document content elements to both read and inject cookies for arbitrary domains. In fact, even many "SSL secured" websites are vulnerable to this sort of active sidejacking. In addition, the ad networks of course perform tracking with cookies as well.

These types of attacks are attempts at subverting our Cross-Origin Identifier Unlinkability and Long-Term Unlinkability design requirements.

- Fingerprint users based on browser attributes

There is an absurd amount of information available to websites via attributes of the browser. This information can be used to reduce the anonymity set, or even uniquely fingerprint individual users. Attacks of this nature are typically aimed at tracking users across sites without their consent, in an attempt to subvert our Cross-Origin Fingerprinting Unlinkability and Long-Term Unlinkability design requirements.

Fingerprinting is an intimidating problem to attempt to tackle, especially without a metric to determine or at least intuitively understand and estimate which features will most contribute to linkability between visits.

The Panopticlick study done by the EFF uses the Shannon entropy - the number of identifying bits of information encoded in browser properties - as this metric. Their result data is definitely useful, and the metric is probably the appropriate one for determining how identifying a particular browser property is. However, some quirks of their study mean that they do not extract as much information as they could from display information: they only use desktop resolution and do not attempt to infer the size of toolbars. In the other direction, they may be over-counting in some areas, as they did not compute joint entropy over multiple attributes that may exhibit a high degree of correlation. Also, new browser features are added regularly, so the data should not be taken as final.
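
To make the entropy metric and the over-counting concern concrete, here is a small Python sketch. It is not the Panopticlick methodology itself: the function names and the sample data are hypothetical, and the only thing taken from the text is the idea of measuring identifying bits and comparing per-attribute entropy against joint entropy.

```python
import math
from collections import Counter


def surprisal_bits(value, observations):
    """Identifying bits revealed by seeing `value` among `observations`."""
    counts = Counter(observations)
    return -math.log2(counts[value] / len(observations))


def shannon_entropy_bits(observations):
    """Shannon entropy (expected surprisal) of an observed distribution."""
    counts = Counter(observations)
    total = len(observations)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def joint_entropy_bits(*attribute_columns):
    """Entropy of several attributes taken together, as tuples per user."""
    return shannon_entropy_bits(list(zip(*attribute_columns)))


# Hypothetical sample: two attributes that are perfectly correlated.
timezones = ["UTC", "UTC", "UTC", "UTC", "PST", "PST", "PST", "PST"]
languages = ["en", "en", "en", "en", "es", "es", "es", "es"]
```

In this made-up sample each attribute carries one bit on its own, but because the two are perfectly correlated the joint entropy is also one bit, not two. Summing per-attribute entropies would therefore over-count, which is the concern raised above.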

Despite the uncertainty, all fingerprinting attacks leverage the following attack vectors:

- Observing Request Behavior

Properties of the user's request behavior comprise the bulk of low-hanging fingerprinting targets. These include: User agent, Accept-* headers, pipeline usage, and request ordering. Additionally, the use of custom filters such as AdBlock and other privacy filters can be used to fingerprint request patterns (as an extreme example).

- Inserting Javascript

Javascript can reveal a lot of fingerprinting information. It provides DOM objects such as window.screen and window.navigator to extract information about the useragent. Also, Javascript can be used to query the user's timezone via the Date() object, WebGL can reveal information about the video card in use, and high precision timing information can be used to fingerprint the CPU and interpreter speed. In the future, new JavaScript features such as Resource Timing may leak an unknown amount of network timing related information.

- Inserting Plugins

The Panopticlick project found that the mere list of installed plugins (in navigator.plugins) was sufficient to provide a large degree of fingerprintability. Additionally, plugins are capable of extracting font lists, interface addresses, and other machine information that is beyond what the browser would normally provide to content. In addition, plugins can be used to store unique identifiers that are more difficult to clear than standard cookies. Flash-based cookies fall into this category, but there are likely numerous other examples. Beyond fingerprinting, plugins are also abysmal at obeying the proxy settings of the browser.

- Inserting CSS

CSS media queries can be inserted to gather information about the desktop size, widget size, display type, DPI, user agent type, and other information that was formerly available only to Javascript.

- Website traffic fingerprinting

Website traffic fingerprinting is an attempt by the adversary to recognize the encrypted traffic patterns of specific websites. In the case of Tor, this attack would take place between the user and the Guard node, or at the Guard node itself.

The most comprehensive study of the statistical properties of this attack against Tor was done by Panchenko et al. Unfortunately, the publication bias in academia has encouraged the production of a number of follow-on attack papers claiming "improved" success rates, in some cases even claiming to completely invalidate any attempt at defense. These "improvements" are actually enabled primarily by taking a number of shortcuts (such as classifying only very small numbers of web pages, neglecting to publish ROC curves or at least false positive rates, and/or omitting the effects of dataset size on their results). Despite these subsequent "improvements", we are skeptical of the efficacy of this attack in a real world scenario, especially in the face of any defenses.

In general, with machine learning, as you increase the number and/or complexity of categories to classify while maintaining a limit on reliable feature information you can extract, you eventually run out of descriptive feature information, and either true positive accuracy goes down or the false positive rate goes up. This error is called the bias in your hypothesis space. In fact, even for unbiased hypothesis spaces, the number of training examples required to achieve a reasonable error bound is a function of the complexity of the categories you need to classify.

In the case of this attack, the key factors that increase the classification complexity (and thus hinder a real world adversary who attempts this attack) are large numbers of dynamically generated pages, partially cached content, and also the non-web activity of the entire Tor network. This yields an effective number of "web pages" many orders of magnitude larger than even Panchenko's "Open World" scenario, which suffered continuous near-constant decline in the true positive rate as the "Open World" size grew (see figure 4). This large level of classification complexity is further confounded by a noisy and low resolution featureset - one which is also relatively easy for the defender to manipulate at low cost.

To make matters worse for a real-world adversary, the ocean of Tor Internet activity (at least, when compared to a lab setting) makes it a certainty that an adversary attempting to examine large amounts of Tor traffic will ultimately be overwhelmed by false positives (even after making heavy tradeoffs on the ROC curve to minimize false positives to below 0.01%). This problem is known in the IDS literature as the Base Rate Fallacy, and it is the primary reason that anomaly and activity classification-based IDS and antivirus systems have failed to materialize in the marketplace (despite early success in academic literature).

Still, we do not believe that these issues are enough to dismiss the attack outright. But we do believe these factors make it both worthwhile and effective to deploy light-weight defenses that reduce the accuracy of this attack by further contributing noise to hinder successful feature extraction.
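
The Base Rate Fallacy argument above can be checked with a few lines of arithmetic. The Python sketch below applies Bayes' rule to compute what fraction of flagged page loads are genuine hits. The function name is hypothetical, and the base rate and true positive rate in the usage note are illustrative assumptions, not measurements; only the 0.01% false positive rate comes from the text.

```python
def flagged_precision(base_rate: float,
                      true_positive_rate: float,
                      false_positive_rate: float) -> float:
    """Probability that a flagged page load really is a target page.

    base_rate: fraction of all observed page loads that are target pages.
    true_positive_rate: fraction of target loads the classifier flags.
    false_positive_rate: fraction of non-target loads the classifier flags.
    """
    true_hits = base_rate * true_positive_rate
    false_hits = (1.0 - base_rate) * false_positive_rate
    return true_hits / (true_hits + false_hits)
```

For instance, if one assumes that only one in a million observed page loads is a target page and that the classifier catches 90% of them, then `flagged_precision(1e-6, 0.9, 1e-4)` comes out below 1%: even at a 0.01% false positive rate, more than 99 of every 100 alerts would be false.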

- Remotely or locally exploit browser and/or OS

Last, but definitely not least, the adversary can exploit either general browser vulnerabilities, plugin vulnerabilities, or OS vulnerabilities to install malware and surveillance software. An adversary with physical access can perform similar actions.

For the purposes of the browser itself, we limit the scope of this adversary to one that has passive forensic access to the disk after browsing activity has taken place. This adversary motivates our Disk Avoidance defenses.

An adversary with arbitrary code execution typically has more power, though. It can be quite hard to really significantly limit the capabilities of such an adversary. The Tails system can provide some defense against this adversary through the use of readonly media and frequent reboots, but even this can be circumvented on machines without Secure Boot through the use of BIOS rootkits.

The Implementation section is divided into subsections, each of which corresponds to a Design Requirement. Each subsection is divided into specific web technologies or properties. The implementation is then described for that property.

In some cases, the implementation meets the design requirements in a non-ideal way (for example, by disabling features). In rare cases, there may be no implementation at all. Both of these cases are denoted by differentiating between the Design Goal and the Implementation Status for each property. Corresponding bugs in the Tor bug tracker are typically linked for these cases.

Proxy obedience is assured through the following:

- Firefox proxy settings, patches, and build flags

Our Firefox preferences file sets the Firefox proxy settings to use Tor directly as a SOCKS proxy. It sets network.proxy.socks_remote_dns, network.proxy.socks_version, network.proxy.socks_port, and network.dns.disablePrefetch.

We also patch Firefox in order to prevent a DNS leak due to a WebSocket rate-limiting check. As stated in the patch, we believe the direct DNS resolution performed by this check is in violation of the W3C standard, but this DNS proxy leak remains present in stock Firefox releases.

During the transition to Firefox 17-ESR, a code audit was undertaken to verify that there were no system calls or XPCOM activity in the source tree that did not use the browser proxy settings. The only violation we found was that WebRTC was capable of creating UDP sockets and was compiled in by default. We subsequently disabled it using the Firefox build option --disable-webrtc.

We have verified that these settings and patches properly proxy HTTPS, OCSP, HTTP, FTP, gopher (now defunct), DNS, SafeBrowsing Queries, all javascript activity, including HTML5 audio and video objects, addon updates, wifi geolocation queries, searchbox queries, XPCOM addon HTTPS/HTTP activity, WebSockets, and live bookmark updates. We have also verified that IPv6 connections are not attempted, through the proxy or otherwise (Tor does not yet support IPv6). We have also verified that external protocol helpers, such as smb urls and other custom protocol handlers are all blocked.

Numerous other third parties have also reviewed and tested the proxy settings and have provided test cases based on their work. See in particular decloak.net.

- Disabling plugins

Plugins have the ability to make arbitrary OS system calls and bypass proxy settings. This includes the ability to make UDP sockets and send arbitrary data independent of the browser proxy settings.

Torbutton disables plugins by using the @mozilla.org/plugin/host;1 service to mark the plugin tags as disabled. This block can be undone through both the Torbutton Security UI, and the Firefox Plugin Preferences.

If the user does enable plugins in this way, plugin-handled objects are still restricted from automatic load through Firefox's click-to-play preference plugins.click_to_play.

In addition, to reduce any unproxied activity by arbitrary plugins at load time, and to reduce the fingerprintability of the installed plugin list, we also patch the Firefox source code to prevent the load of any plugins except for Flash and Gnash.

- External App Blocking and Drag Event Filtering

External apps can be induced to load files that perform network activity. Unfortunately, there are cases where such apps can be launched automatically with little to no user input. In order to prevent this, Torbutton installs a component to provide the user with a popup whenever the browser attempts to launch a helper app.

Additionally, modern desktops now pre-emptively fetch any URLs in Drag and Drop events as soon as the drag is initiated. This download happens independent of the browser's Tor settings, and can be triggered by something as simple as holding the mouse button down for slightly too long while clicking on an image link. We had to patch Firefox to emit an observer event during dragging to allow us to filter the drag events from Torbutton before the OS downloads the URLs the events contained.

- Disabling system extensions and clearing the addon whitelist

Firefox addons can perform arbitrary activity on your computer, including bypassing Tor. It is for this reason we disable the addon whitelist (xpinstall.whitelist.add), so that users are prompted before installing addons regardless of the source. We also exclude system-level addons from the browser through the use of extensions.enabledScopes and extensions.autoDisableScopes.

Tor Browser State is separated from existing browser state through use of a custom Firefox profile, and by setting the $HOME environment variable to the root of the bundle's directory. The browser also does not load any system-wide extensions (through the use of extensions.enabledScopes and extensions.autoDisableScopes). Furthermore, plugins are disabled, which prevents Flash cookies from leaking from a pre-existing Flash directory.

The User Agent MUST (at user option) prevent all disk records of browser activity. The user should be able to optionally enable URL history and other history features if they so desire.

We achieve this goal through several mechanisms. First, we set the Firefox Private Browsing preference browser.privatebrowsing.autostart. In addition, four Firefox patches are needed to prevent disk writes, even if Private Browsing Mode is enabled. We need to prevent the permissions manager from recording HTTPS STS state, prevent intermediate SSL certificates from being recorded, prevent download history from being recorded, and prevent the content preferences service from recording site zoom. For more details on these patches, see the Firefox Patches section.

As an additional defense-in-depth measure, we set the following preferences: browser.cache.disk.enable, browser.cache.offline.enable, dom.indexedDB.enabled, network.cookie.lifetimePolicy, signon.rememberSignons, browser.formfill.enable, browser.download.manager.retention, and browser.sessionstore.privacy_level. Many of these preferences are likely redundant with browser.privatebrowsing.autostart, but we have not yet done the auditing work to confirm that.

Torbutton also contains code to prevent the Firefox session store from writing to disk.

For more details on disk leak bugs and enhancements, see the tbb-disk-leak tag in our bugtracker.

Tor Browser Bundle MUST NOT cause any information to be written outside of the bundle directory. This is to ensure that the user is able to completely and safely remove the bundle without leaving other traces of Tor usage on their computer.

To ensure TBB directory isolation, we set browser.download.useDownloadDir, browser.shell.checkDefaultBrowser, and browser.download.manager.addToRecentDocs. We also set the $HOME environment variable to be the TBB extraction directory.
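
As a rough illustration of the $HOME redirection described above, the following Python sketch builds a process environment whose HOME points at the bundle directory. This is not the bundle's actual launcher; the function name and the example paths are hypothetical.

```python
import os


def bundle_environment(bundle_dir, base_env=None):
    """Return a copy of the environment with HOME set to the bundle root.

    Assumption: applications that consult $HOME for dotfiles and caches
    will then write inside the bundle directory instead of the user's
    real home directory.
    """
    env = dict(os.environ if base_env is None else base_env)
    env["HOME"] = os.path.abspath(bundle_dir)
    return env
```

A launcher could pass the returned mapping as the `env` argument of `subprocess.Popen` when starting the browser binary, leaving the caller's own environment untouched.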

The Tor Browser MUST prevent a user's activity on one site from being linked to their activity on another site. When this goal cannot yet be met with an existing web technology, that technology or functionality is disabled. Our design goal is to ultimately eliminate the need to disable arbitrary technologies, and instead simply alter them in ways that allow them to function in a backwards-compatible way while avoiding linkability. Users should be able to use federated login of various kinds to explicitly inform sites who they are, but that information should not transparently allow a third party to record their activity from site to site without their prior consent.

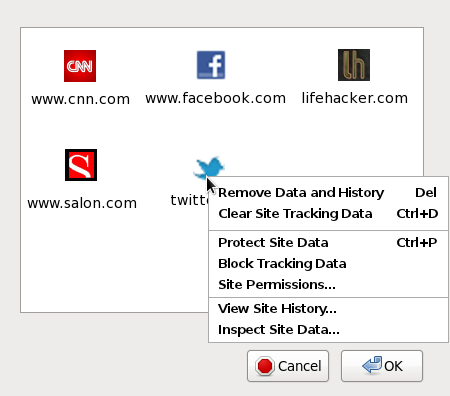

The benefit of this approach comes not only in the form of reduced linkability, but also in terms of simplified privacy UI. If all stored browser state and permissions become associated with the url bar origin, the six or seven different pieces of privacy UI governing these identifiers and permissions can become just one piece of UI. For instance, a window that lists the url bar origin for which browser state exists, possibly with a context-menu option to drill down into specific types of state or permissions. An example of this simplification can be seen in Figure 1.

Figure 1. Improving the Privacy UI

- Cookies

Design Goal: All cookies MUST be double-keyed to the url bar origin and third-party origin. There exists a Mozilla bug that contains a prototype patch, but it lacks UI, and does not apply to modern Firefoxes.

Implementation Status: As a stopgap to satisfy our design requirement of unlinkability, we currently entirely disable 3rd party cookies by setting network.cookie.cookieBehavior to 1. We would prefer that third party content continue to function, but we believe the requirement for unlinkability trumps that desire.
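
The double-keying design goal can be sketched as a cookie store indexed by the pair (url bar origin, third-party origin). The Python below is a conceptual model only, not the Mozilla prototype patch or Firefox's cookie service; the class, method names, and example domains are hypothetical.

```python
class DoubleKeyedCookieJar:
    """Toy cookie jar keyed by (url bar origin, cookie origin)."""

    def __init__(self):
        self._jar = {}

    def set_cookie(self, url_bar_origin, cookie_origin, name, value):
        # Each (url bar origin, cookie origin) pair gets its own namespace.
        key = (url_bar_origin, cookie_origin)
        self._jar.setdefault(key, {})[name] = value

    def cookies_for(self, url_bar_origin, cookie_origin):
        # A third party only sees cookies it set under this url bar origin.
        return dict(self._jar.get((url_bar_origin, cookie_origin), {}))
```

Under this model a tracker embedded on two different sites receives two unrelated cookie namespaces, so third-party content can keep working without its identifier linking the two visits.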

- Cache

Cache is isolated to the url bar origin by using a technique pioneered by Collin Jackson et al., via their work on SafeCache. The technique re-uses the nsICachingChannel.cacheKey attribute that Firefox uses internally to prevent improper caching and reuse of HTTP POST data.

However, to increase the security of the isolation and to solve conflicts with OCSP relying on the cacheKey property for reuse of POST requests, we had to patch Firefox to provide a cacheDomain cache attribute. We use the fully qualified url bar domain as input to this field, to avoid the complexities of heuristically determining the second-level DNS name.

Furthermore, we chose a different isolation scheme than the Stanford implementation. First, we decoupled the cache isolation from the third party cookie attribute. Second, we use several mechanisms to attempt to determine the actual location attribute of the top-level window (to obtain the url bar FQDN) used to load the page, as opposed to relying solely on the Referer property.

Therefore, the original Stanford test cases are expected to fail. Functionality can still be verified by navigating to about:cache and viewing the key used for each cache entry. Each third party element should have an additional "domain=string" property prepended, which will list the FQDN that was used to source the third party element.

Additionally, because the image cache is a separate entity from the content cache, we had to patch Firefox to also isolate this cache per url bar domain.
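As a rough illustration of the isolation idea, the sketch below builds a cache key that is scoped to the url bar FQDN. The function name and the exact `domain=<fqdn>:` layout are assumptions made for the example; the real key format is whatever about:cache displays.

```python
from urllib.parse import urlsplit


def isolated_cache_key(url_bar_url, resource_url):
    """Build a cache key scoped to the url bar FQDN.

    The "domain=<fqdn>:" prefix layout is illustrative only.
    """
    fqdn = (urlsplit(url_bar_url).hostname or "").lower()
    return f"domain={fqdn}:{resource_url}"


key_a = isolated_cache_key("https://a.example/page", "https://cdn.example/lib.js")
key_b = isolated_cache_key("https://b.example/", "https://cdn.example/lib.js")
```

Because the two keys differ, a resource cached while visiting one site is never served from cache on another, which removes the cache as a cross-site identifier store.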

- HTTP Auth

To prevent silent linkability between domains, HTTP authentication tokens are removed for third party elements. We use the http-on-modify-request observer to strip the Authorization headers from these requests.
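The header filtering can be sketched as follows. This is a simplified model rather than the observer code itself: the function names are hypothetical, and "third party" is approximated by comparing hostnames.

```python
from urllib.parse import urlsplit


def is_third_party(url_bar_url, request_url):
    """Simplified check: the hosts differ."""
    return urlsplit(url_bar_url).hostname != urlsplit(request_url).hostname


def strip_third_party_auth(url_bar_url, request_url, headers):
    """Return a copy of headers, without Authorization for third party requests."""
    if not is_third_party(url_bar_url, request_url):
        return dict(headers)
    return {k: v for k, v in headers.items() if k.lower() != "authorization"}


headers = {"Authorization": "Basic dXNlcjpwYXNz", "Accept": "*/*"}
first = strip_third_party_auth("https://a.example/", "https://a.example/img.png", headers)
third = strip_third_party_auth("https://a.example/", "https://b.example/img.png", headers)
```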

- DOM Storage

DOM storage for third party domains MUST be isolated to the url bar origin, to prevent linkability between sites. This functionality is provided through a patch to Firefox.

- Flash cookies

Design Goal: Users should be able to click-to-play flash objects from trusted sites. To make this behavior unlinkable, we wish to include a settings file for all platforms that disables flash cookies using the Flash settings manager.

Implementation Status: We are currently having difficulties causing Flash player to use this settings file on Windows, so Flash remains difficult to enable.

- SSL+TLS session resumption, HTTP Keep-Alive and SPDY

Design Goal: TLS session resumption tickets and SSL Session IDs MUST be limited to the url bar origin. HTTP Keep-Alive connections from a third party in one url bar origin MUST NOT be reused for that same third party in another url bar origin.

Implementation Status: We currently clear SSL Session IDs upon New Identity, and we disable TLS Session Tickets via the Firefox Pref security.enable_tls_session_tickets. We disable SSL Session IDs via a patch to Firefox. To compensate for the increased round trip latency from disabling these performance optimizations, we also enable TLS False Start via the Firefox Pref security.ssl.enable_false_start.

Because of the extreme performance benefits of HTTP Keep-Alive for interactive web apps, and because of the difficulties of conveying urlbar origin information down into the Firefox HTTP layer, as a compromise we currently merely reduce the HTTP Keep-Alive timeout to 20 seconds (which is measured from the last packet read on the connection) using the Firefox preference network.http.keep-alive.timeout.

However, because SPDY can store identifiers and has extremely long keepalive duration, it is disabled through the Firefox preference network.http.spdy.enabled.
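The preference settings named in this section can be summarized in one place. The sketch below renders them as `user_pref()` lines in Firefox's user.js syntax; the values are inferred from the text (tickets and SPDY disabled, False Start enabled, a 20 second timeout), and how Tor Browser actually ships them is not specified here.

```python
import json

# Values follow the text: session tickets and SPDY off, False Start on,
# keep-alive timeout reduced to 20 seconds.
PREFS = {
    "security.enable_tls_session_tickets": False,
    "security.ssl.enable_false_start": True,
    "network.http.keep-alive.timeout": 20,
    "network.http.spdy.enabled": False,
}


def render_user_js(prefs):
    """Render prefs as user_pref() lines in Firefox's user.js syntax."""
    return [f'user_pref("{name}", {json.dumps(value)});' for name, value in prefs.items()]


lines = render_user_js(PREFS)
```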

- Automated cross-origin redirects MUST NOT store identifiers

Design Goal: To prevent attacks aimed at subverting the Cross-Origin Identifier Unlinkability privacy requirement, the browser MUST NOT store any identifiers (cookies, cache, DOM storage, HTTP auth, etc) for cross-origin redirect intermediaries that do not prompt for user input. For example, if a user clicks on a bit.ly url that redirects to a doubleclick.net url that finally redirects to a cnn.com url, only cookies from cnn.com should be retained after the redirect chain completes.

Non-automated redirect chains that require user input at some step (such as federated login systems) SHOULD still allow identifiers to persist.

Implementation status: There are numerous ways for the user to be redirected, and the Firefox API support to detect each of them is poor. We have a trac bug open to implement what we can.
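The intended policy can be stated compactly in code. This sketch only models the decision of which hosts may keep identifiers once a redirect chain completes; it assumes the chain and the set of hosts that prompted for user input are already known, which is exactly the part that is hard to detect in Firefox. The function name is hypothetical.

```python
def hosts_allowed_to_store(redirect_chain, prompted_hosts=frozenset()):
    """Return the hosts that may retain identifiers after a redirect chain.

    redirect_chain: hosts in the order visited; the last one is the destination.
    prompted_hosts: intermediaries that required user input (e.g. a login form).
    """
    if not redirect_chain:
        return set()
    allowed = {redirect_chain[-1]}
    allowed.update(h for h in redirect_chain[:-1] if h in prompted_hosts)
    return allowed


automated = hosts_allowed_to_store(["bit.ly", "doubleclick.net", "cnn.com"])
federated = hosts_allowed_to_store(
    ["site.example", "login.example", "site.example"],
    prompted_hosts={"login.example"},
)
```

For the bit.ly example above, only cnn.com is retained; in the federated login case the login provider keeps its identifiers because the user interacted with it.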

- window.name

window.name is a magical DOM property that for some reason is allowed to retain a persistent value for the lifespan of a browser tab. It is possible to utilize this property for identifier storage.

In order to eliminate non-consensual linkability but still allow for sites that utilize this property to function, we reset the window.name property of tabs in Torbutton every time we encounter a blank Referer. This behavior allows window.name to persist for the duration of a click-driven navigation session, but as soon as the user enters a new URL or navigates between https/http schemes, the property is cleared.
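A minimal model of this rule, with a hypothetical `Tab` class standing in for a browser tab, might look like this:

```python
class Tab:
    """Stand-in for a browser tab that carries a window.name value."""

    def __init__(self):
        self.window_name = ""


def on_document_load(tab, referer):
    """Clear window.name whenever a load arrives with a blank Referer."""
    if not referer:
        tab.window_name = ""


tab = Tab()
tab.window_name = "tracking-id-42"
on_document_load(tab, "https://a.example/")  # click-driven navigation: value kept
kept = tab.window_name
on_document_load(tab, "")  # typed URL or scheme change: value cleared
```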

- Auto form-fill

We disable the password saving functionality in the browser as part of our Disk Avoidance requirement. However, since users may decide to re-enable disk history records and password saving, we also set the signon.autofillForms preference to false to prevent saved values from immediately populating fields upon page load. Since Javascript can read these values as soon as they appear, setting this preference prevents automatic linkability from stored passwords.

- HSTS supercookies

An extreme (but not impossible) attack to mount is the creation of HSTS supercookies. Since HSTS effectively stores one bit of information per domain name, an adversary in possession of numerous domains can use them to construct cookies based on stored HSTS state.

Design Goal: There appear to be three options for us: 1. Disable HSTS entirely, and rely instead on HTTPS-Everywhere to crawl and ship rules for HSTS sites. 2. Restrict the number of HSTS-enabled third parties allowed per url bar origin. 3. Prevent third parties from storing HSTS rules. We have not yet decided upon the best approach.

Implementation Status: Currently, HSTS state is cleared by New Identity, but we don't defend against the creation of these cookies between New Identity invocations.
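To make the one-bit-per-domain observation concrete, the sketch below models the browser's HSTS store as a set of hostnames and shows how an adversary controlling several domains could write and later read back an identifier. The domain names and function names are hypothetical, and the network requests that would actually set and probe HSTS state are left out.

```python
def write_id(hsts_store, domains, user_id):
    """Set HSTS for domain i exactly when bit i of user_id is 1."""
    for i, domain in enumerate(domains):
        if (user_id >> i) & 1:
            hsts_store.add(domain)


def read_id(hsts_store, domains):
    """Recover the identifier by checking which domains have HSTS state."""
    return sum(1 << i for i, domain in enumerate(domains) if domain in hsts_store)


domains = [f"b{i}.tracker.example" for i in range(16)]
store = set()
write_id(store, domains, 0xBEEF)
recovered = read_id(store, domains)
```

Sixteen domains are enough for 65536 distinct identifiers, which is why the state must at least be cleared by New Identity.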

- Exit node usage

Design Goal: Every distinct navigation session (as defined by a non-blank Referer header) MUST exit through a fresh Tor circuit in Tor Browser to prevent exit node observers from linking concurrent browsing activity.

Implementation Status: The Tor feature that supports this ability only exists in the 0.2.3.x-alpha series. Ticket #3455 is the Torbutton ticket to make use of the new Tor functionality.

For more details on identifier linkability bugs and enhancements, see the tbb-linkability tag in our bugtracker.

In order to properly address the fingerprinting adversary on a technical level, we need a metric to measure linkability of the various browser properties beyond any stored origin-related state. The Panopticlick Project by the EFF provides us with a prototype of such a metric. The researchers conducted a survey of volunteers who were asked to visit an experiment page that harvested many of the above components. They then computed the Shannon Entropy of the resulting distribution of each of several key attributes to determine how many bits of identifying information each attribute provided.
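The entropy metric can be computed directly from a list of observed attribute values. The following sketch uses made-up sample data, not Panopticlick's dataset, and shows both the Shannon entropy of an attribute's distribution and the identifying information (surprisal) carried by one particular value.

```python
import math
from collections import Counter


def shannon_entropy_bits(values):
    """Shannon entropy, in bits, of the empirical distribution of values."""
    counts = Counter(values)
    total = len(values)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def surprisal_bits(values, value):
    """Bits of identifying information revealed by observing one value."""
    return -math.log2(Counter(values)[value] / len(values))


# Hypothetical observations of a single attribute across eight visitors.
timezones = ["UTC", "UTC", "UTC", "UTC", "EST", "EST", "CET", "PST"]
```

An attribute for which every user reports the same value has zero entropy, which is the property the uniformity requirements in this section aim for within the Tor Browser population.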

Many browser features have been added since the EFF first ran their experiment and collected their data. To avoid an infinite sinkhole, we reduce the efforts for fingerprinting resistance by only concerning ourselves with reducing the fingerprintable differences among Tor Browser users. We do not believe it is possible to solve cross-browser fingerprinting issues.

Unfortunately, the unsolvable nature of the cross-browser fingerprinting problem means that the Panopticlick test website itself is not useful for evaluating the actual effectiveness of our defenses, or the fingerprinting defenses of any other web browser. Because the Panopticlick dataset is based on browser data spanning a number of widely deployed browsers over a number of years, any fingerprinting defenses attempted by browsers today are very likely to cause Panopticlick to report an increase in fingerprintability and entropy, because those defenses will stand out in sharp contrast to historical data. We have been working to convince the EFF that it is worthwhile to release the source code to Panopticlick to allow us to run our own version for this reason.

- Plugins

Plugins add to fingerprinting risk via two main vectors: their mere presence in window.navigator.plugins, as well as their internal functionality.

Design Goal: All plugins that have not been specifically audited or sandboxed MUST be disabled. To reduce linkability potential, even sandboxed plugins should not be allowed to load objects until the user has clicked through a click-to-play barrier. Additionally, version information should be reduced or obfuscated until the plugin object is loaded. For flash, we wish to provide a settings.sol file to disable Flash cookies, and to restrict P2P features that are likely to bypass proxy settings.

Implementation Status: Currently, we entirely disable all plugins in Tor Browser. However, as a compromise due to the popularity of Flash, we allow users to re-enable Flash, and flash objects are blocked behind a click-to-play barrier that is available only after the user has specifically enabled plugins. Flash is the only plugin available, the rest are entirely blocked from loading by a Firefox patch. We also set the Firefox preference plugin.expose_full_path to false, to avoid leaking plugin installation information.

- HTML5 Canvas Image Extraction

The HTML5 Canvas is a feature that was added to major browsers after the EFF developed their Panopticlick study. After plugins and plugin-provided information, we believe that the HTML5 Canvas is the single largest fingerprinting threat browsers face today. Initial studies show that the Canvas can provide an easy-access fingerprinting target: The adversary simply renders WebGL, font, and named color data to a Canvas element, extracts the image buffer, and computes a hash of that image data. Subtle differences in the video card, font packs, and even font and graphics library versions allow the adversary to produce a stable, simple, high-entropy fingerprint of a computer. In fact, the hash of the rendered image can be used almost identically to a tracking cookie by the web server.

To reduce the threat from this vector, we have patched Firefox to prompt before returning valid image data to the Canvas APIs. If the user hasn't previously allowed the site in the URL bar to access Canvas image data, pure white image data is returned to the Javascript APIs.
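The effect of this behavior on a fingerprinting script can be sketched as follows. The function and the permission set are hypothetical stand-ins for the patched Canvas APIs, and the user prompt itself is omitted.

```python
import hashlib


def read_canvas_pixels(real_pixels, url_bar_site, allowed_sites):
    """Return real image data only for sites the user has allowed.

    Otherwise return a buffer of the same length filled with 0xFF bytes,
    i.e. opaque white in RGBA.
    """
    if url_bar_site in allowed_sites:
        return real_pixels
    return b"\xff" * len(real_pixels)


# Two machines that render the same drawing slightly differently.
pixels_a = bytes([10, 20, 30, 255] * 4)
pixels_b = bytes([11, 20, 30, 255] * 4)
hash_a = hashlib.sha256(read_canvas_pixels(pixels_a, "tracker.example", set())).hexdigest()
hash_b = hashlib.sha256(read_canvas_pixels(pixels_b, "tracker.example", set())).hexdigest()
```

Without permission, both machines yield the same hash, so the extracted image no longer distinguishes them.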

- WebGL

WebGL is fingerprintable both through information that is exposed about the underlying driver and optimizations, as well as through performance fingerprinting.

Because of the large number of potential fingerprinting vectors and the previously unexposed vulnerability surface, we deploy a similar strategy against WebGL as for plugins. First, WebGL Canvases have click-to-play placeholders (provided by NoScript), and do not run until authorized by the user. Second, we obfuscate driver information by setting the Firefox preferences webgl.disable-extensions and webgl.min_capability_mode, which reduce the information provided by the following WebGL API calls: getParameter(), getSupportedExtensions(), and getExtension().

- Fonts

According to the Panopticlick study, fonts provide the most linkability when they are provided as an enumerable list in filesystem order, via either the Flash or Java plugins. However, it is still possible to use CSS and/or Javascript to query for the existence of specific fonts. With a large enough pre-built list to query, a large amount of fingerprintable information may still be available.

The sure-fire way to address font linkability is to ship the browser with a font for every language, typeface, and style in use in the world, and to only use those fonts to the exclusion of system fonts. However, this set may be impractically large. It is possible that a smaller common subset may be found that provides total coverage. However, we believe that with strong url bar origin identifier isolation, a simpler approach can reduce the number of bits available to the adversary while avoiding the rendering and language issues of supporting a global font set.

Implementation Status: We disable plugins, which prevents font enumeration. Additionally, we limit both the number of font queries from CSS, as well as the total number of fonts that can be used in a document with a Firefox patch. We create two prefs, browser.display.max_font_attempts and browser.display.max_font_count for this purpose. Once these limits are reached, the browser behaves as if browser.display.use_document_fonts was set. We are still working to determine optimal values for these prefs.

To improve rendering, we exempt remote @font-face fonts from these counts, and if a font-family CSS rule lists a remote font (in any order), we use that font instead of any of the named local fonts.
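One possible reading of these limits is sketched below. The `FontLimiter` class is hypothetical and the limit values are arbitrary; it only models the counting rules described above (a cap on local font lookups, a cap on distinct local fonts used, and remote fonts taking priority without being counted), not the actual nsRuleNode changes.

```python
class FontLimiter:
    """Per-document limits on local font lookups and local fonts used."""

    def __init__(self, max_attempts, max_count, installed):
        self.max_attempts = max_attempts
        self.max_count = max_count
        self.installed = set(installed)
        self.attempts = 0
        self.used = set()

    def resolve(self, families, remote_fonts):
        """Return the font to use, or None to fall back to the default font."""
        # A remote @font-face font wins regardless of order and is not counted.
        for name in families:
            if name in remote_fonts:
                return name
        for name in families:
            if self.attempts >= self.max_attempts:
                break
            self.attempts += 1
            if name in self.installed:
                if name in self.used or len(self.used) < self.max_count:
                    self.used.add(name)
                    return name
                break
        return None


limiter = FontLimiter(max_attempts=2, max_count=1, installed={"Arial", "Verdana"})
results = [
    limiter.resolve(["Arial"], set()),
    limiter.resolve(["Verdana"], set()),
    limiter.resolve(["Arial"], set()),
    limiter.resolve(["Arial", "WebFont"], {"WebFont"}),
]
```

Once either limit is hit, further local lookups return the default font, which bounds how many installed fonts a page can probe.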

- Desktop resolution, CSS Media Queries, and System Colors

Both CSS and Javascript have access to a lot of information about the screen resolution, usable desktop size, OS widget size, toolbar size, title bar size, system theme colors, and other desktop features that are not at all relevant to rendering and serve only to provide information for fingerprinting.

Design Goal: Our design goal here is to reduce the resolution information down to the bare minimum required for properly rendering inside a content window. We intend to report all rendering information correctly with respect to the size and properties of the content window, but report an effective size of 0 for all border material, and also report that the desktop is only as big as the inner content window. Additionally, new browser windows are sized such that their content windows are one of a few fixed sizes based on the user's desktop resolution.

Implementation Status: We have implemented the above strategy using a window observer to resize new windows based on desktop resolution. Additionally, we patch Firefox to use the client content window size for window.screen and for CSS Media Queries. Similarly, we patch DOM events to return content window relative points. We also patch Firefox to report a fixed set of system colors to content window CSS.

To further reduce resolution-based fingerprinting, we are investigating zoom/viewport-based mechanisms that might allow us to always report the same desktop resolution regardless of the actual size of the content window, and simply scale to make up the difference. However, the complexity and rendering impact of such a change is not yet known.
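The current strategy can be modeled in a few lines. The size buckets below are hypothetical, since the actual sizes are not listed in this document; the property names are standard DOM names, and every one of them reports the content window size so that borders and the desktop contribute nothing.

```python
# Hypothetical content window sizes; the real set is not specified here.
SIZE_BUCKETS = [(800, 600), (1000, 700), (1200, 900)]


def pick_content_size(desktop_w, desktop_h):
    """Pick the largest bucket that fits the desktop, else the smallest bucket."""
    fitting = [(w, h) for (w, h) in SIZE_BUCKETS if w <= desktop_w and h <= desktop_h]
    return max(fitting) if fitting else SIZE_BUCKETS[0]


def reported_metrics(content_w, content_h):
    """Values exposed to content: the desktop appears to equal the content window."""
    return {
        "innerWidth": content_w,
        "innerHeight": content_h,
        "outerWidth": content_w,
        "outerHeight": content_h,
        "screen.width": content_w,
        "screen.height": content_h,
    }


size = pick_content_size(1366, 768)
metrics = reported_metrics(*size)
```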

- User Agent and HTTP Headers

Design Goal: All Tor Browser users MUST provide websites with an identical user agent and HTTP header set for a given request type. We omit the Firefox minor revision, and report a popular Windows platform. If the software is kept up to date, these headers should remain identical across the population even when updated.

Implementation Status: Firefox provides several options for controlling the browser user agent string which we leverage. We also set similar prefs for controlling the Accept-Language and Accept-Charset headers, which we spoof to English by default. Additionally, we remove content script access to Components.interfaces, which can be used to fingerprint OS, platform, and Firefox minor version.

- Timezone and clock offset

Design Goal: All Tor Browser users MUST report the same timezone to websites. Currently, we choose UTC for this purpose, although an equally valid argument could be made for EDT/EST due to the large English-speaking population density (coupled with the fact that we spoof a US English user agent). Additionally, the Tor software should detect if the user's clock is significantly divergent from the clocks of the relays that it connects to, and use this to reset the clock values used in Tor Browser to something reasonably accurate.

Implementation Status: We set the timezone using the TZ environment variable, which is supported on all platforms. Additionally, we plan to obtain a clock offset from Tor, but this won't be available until Tor 0.2.3.x is in use.

- Javascript performance fingerprinting

Javascript performance fingerprinting is the act of profiling the performance of various Javascript functions for the purpose of fingerprinting the Javascript engine and the CPU.

Design Goal: We have several potential mitigation approaches to reduce the accuracy of performance fingerprinting without risking too much damage to functionality. Our current favorite is to reduce the resolution of the Event.timeStamp and the Javascript Date() object, while also introducing jitter. Our goal is to increase the amount of time it takes to mount a successful attack. Mowery et al found that even with the default precision in most browsers, they required up to 120 seconds of amortization and repeated trials to get stable results from their feature set. We intend to work with the research community to establish the optimum trade-off between quantization+jitter and amortization time.

Implementation Status: Currently, the only mitigation against performance fingerprinting is to disable Navigation Timing through the Firefox preference dom.enable_performance.
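The quantization and jitter idea from the design goal can be sketched as below. This is not implemented in Tor Browser according to the status above; the resolution and jitter values are placeholders, since the optimum trade-off is still an open question.

```python
import random


def coarse_timestamp(t_ms, resolution_ms=100.0, jitter_ms=20.0, rng=random):
    """Round a timestamp down to a coarse grid, then add bounded random jitter.

    resolution_ms and jitter_ms are placeholder values for illustration.
    """
    quantized = (t_ms // resolution_ms) * resolution_ms
    return quantized + rng.uniform(0.0, jitter_ms)


rng = random.Random(0)  # seeded only to make the example repeatable
samples = [coarse_timestamp(1234.5, rng=rng) for _ in range(5)]
```

Every sample lands between 1200 and 1220 ms regardless of where in the 100 ms window the true time fell, so an attacker needs many more repeated trials to recover fine-grained timing.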

- Non-Uniform HTML5 API Implementations

At least two HTML5 features have different implementation status across the major OS vendors: the Battery API and the Network Connection API. We disable these APIs through the Firefox preferences dom.battery.enabled and dom.network.enabled.

- Keystroke fingerprinting

Keystroke fingerprinting is the act of measuring key strike time and key flight time. It is seeing increasing use as a biometric.

Design Goal: We intend to rely on the same mechanisms for defeating Javascript performance fingerprinting: timestamp quantization and jitter.

Implementation Status: We have no implementation as of yet.

For more details on fingerprinting bugs and enhancements, see the tbb-fingerprinting tag in our bugtracker.

In order to avoid long-term linkability, we provide a "New Identity" context menu option in Torbutton. This context menu option is active if Torbutton can read the environment variables $TOR_CONTROL_PASSWD and $TOR_CONTROL_PORT.

First, Torbutton disables Javascript in all open tabs and windows by using both the browser.docShell.allowJavascript attribute as well as nsIDOMWindowUtils.suppressEventHandling(). We then stop all page activity for each tab using browser.webNavigation.stop(nsIWebNavigation.STOP_ALL). We then clear the site-specific Zoom by temporarily disabling the preference browser.zoom.siteSpecific, and clear the GeoIP wifi token URL geo.wifi.access_token and the last opened URL prefs (if they exist). Each tab is then closed.

After closing all tabs, we then emit "browser:purge-session-history" (which instructs addons and various Firefox components to clear their session state), and then manually clear the following state: searchbox and findbox text, HTTP auth, SSL state, OCSP state, site-specific content preferences (including HSTS state), content and image cache, offline cache, Cookies, DOM storage, DOM local storage, the safe browsing key, and the Google wifi geolocation token (if it exists).

After the state is cleared, we then close all remaining HTTP keep-alive connections and then send the NEWNYM signal to the Tor control port to cause a new circuit to be created.

Finally, a fresh browser window is opened, and the current browser window is closed (this does not spawn a new Firefox process, only a new window).

If the user chose to "protect" any cookies by using the Torbutton Cookie Protections UI, those cookies are not cleared as part of the above.
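The NEWNYM step above uses the Tor control protocol. The sketch below only builds the two command lines that would be written to the control port; it assumes the password is a plain string to be sent as a quoted string, which may not match how $TOR_CONTROL_PASSWD is encoded in practice, and it does not open a socket.

```python
def newnym_commands(password):
    """Build control-port commands to authenticate and request new circuits.

    Assumes a plain-text password that must be quoted and escaped.
    """
    escaped = password.replace("\\", "\\\\").replace('"', '\\"')
    return [f'AUTHENTICATE "{escaped}"\r\n', "SIGNAL NEWNYM\r\n"]


commands = newnym_commands("secret")
```

Each command is terminated by CRLF, and the controller should wait for a `250 OK` reply to the first before relying on the second.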

In addition to the above mechanisms that are devoted to preserving privacy while browsing, we also have a number of technical mechanisms to address other privacy and security issues.

- Website Traffic Fingerprinting Defenses

Website Traffic Fingerprinting is a statistical attack to attempt to recognize specific encrypted website activity.

We want to deploy a mechanism that reduces the accuracy of useful features available for classification. This mechanism would either impact the true and false positive accuracy rates, or reduce the number of webpages that could be classified at a given accuracy rate.

Ideally, this mechanism would be as light-weight as possible, and would be tunable in terms of overhead. We suspect that it may even be possible to deploy a mechanism that reduces feature extraction resolution without any network overhead. In the no-overhead category, we have HTTPOS and better use of HTTP pipelining and/or SPDY. In the tunable/low-overhead category, we have Adaptive Padding and Congestion-Sensitive BUFLO. It may be also possible to tune such defenses such that they only use existing spare Guard bandwidth capacity in the Tor network, making them also effectively no-overhead.

Currently, we patch Firefox to randomize pipeline order and depth. Unfortunately, pipelining is very fragile. Many sites do not support it, and even sites that advertise support for pipelining may simply return error codes for successive requests, effectively forcing the browser into non-pipelined behavior. Firefox also has code to back off and reduce or eliminate the pipeline if this happens. These shortcomings and fallback behaviors are the primary reason that Google developed SPDY as opposed to simply extending HTTP to improve pipelining. It turns out that we could actually deploy exit-side proxies that allow us to use SPDY from the client to the exit node. This would make our defense not only free, but one that actually improves performance.

Knowing this, we created this defense as an experimental research prototype to help evaluate what could be done in the best case with full server support. Unfortunately, the bias in favor of compelling attack papers has caused academia to ignore this request thus far, instead publishing only cursory (yet "devastating") evaluations that fail to provide even simple statistics such as the rates of actual pipeline utilization during their evaluations, in addition to the other shortcomings and shortcuts mentioned earlier. We can accept that our defense might fail to work as well as others (in fact we expect it), but unfortunately the very same shortcuts that provide excellent attack results also allow the conclusion that all defenses are broken forever. So sadly, we are still left in the dark on this point.

- Privacy-preserving update notification

In order to inform the user when their Tor Browser is out of date, we perform a privacy-preserving update check asynchronously in the background. The check uses Tor to download the file https://check.torproject.org/RecommendedTBBVersions and searches that version list for the current value for the local preference torbrowser.version. If the value from our preference is present in the recommended version list, the check is considered to have succeeded and the user is up to date. If not, it is considered to have failed and an update is needed. The check is triggered upon browser launch, new window, and new tab, but is rate limited so as to happen no more frequently than once every 1.5 hours.

If the check fails, we cache this fact, and update the Torbutton graphic to display a flashing warning icon and insert a menu option that provides a link to our download page. Additionally, we reset the value for the browser homepage to point to a page that informs the user that their browser is out of date.
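The check logic itself is small. The sketch below assumes the downloaded version list is a JSON array of version strings, which is an assumption about the file format rather than something stated here; the download over Tor and the UI changes are omitted, and the function names are hypothetical.

```python
import json

RATE_LIMIT_SECONDS = 1.5 * 60 * 60  # no more than one check every 1.5 hours


def is_up_to_date(version_list_text, local_version):
    """True if the local torbrowser.version value appears in the recommended list."""
    return local_version in json.loads(version_list_text)


def should_check(now, last_check):
    """Rate limit: allow a check only if enough time has passed since the last one."""
    return last_check is None or now - last_check >= RATE_LIMIT_SECONDS


recommended = '["2.3.25-5", "2.4.10-alpha-1"]'  # made-up sample content
```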

The set of patches we have against Firefox can be found in the current-patches directory of the torbrowser git repository. They are:

- Block Components.interfaces

In order to reduce fingerprinting, we block access to this interface from content script. Components.interfaces can be used for fingerprinting the platform, OS, and Firefox version, but not much else.

- Make Permissions Manager memory only

This patch exposes a pref 'permissions.memory_only' that properly isolates to memory the permissions manager, which is responsible for all user specified site permissions as well as stored HSTS policy from visited sites. The pref does successfully clear the permissions manager memory if toggled. It does not need to be set in prefs.js, and can be handled by Torbutton.

- Make Intermediate Cert Store memory-only

The intermediate certificate store records the intermediate SSL certificates the browser has seen to date. Because these intermediate certificates are used by a limited number of domains (and in some cases, only a single domain), the intermediate certificate store can serve as a low-resolution record of browsing history.

Design Goal: As an additional design goal, we would like to later alter this patch to allow this information to be cleared from memory. The implementation does not currently allow this.

- Add a string-based cacheKey property for domain isolation

To increase the security of cache isolation and to solve strange and unknown conflicts with OCSP, we had to patch Firefox to provide a cacheDomain cache attribute. We use the url bar FQDN as input to this field.

- Block all plugins except flash

We cannot use the @mozilla.org/extensions/blocklist;1 service, because we actually want to stop plugins from ever entering the browser's process space and/or executing code (for example, AV plugins that collect statistics/analyze URLs, magical toolbars that phone home or "help" the user, Skype buttons that ruin our day, and censorship filters). Hence we rolled our own.

- Make content-prefs service memory only

This patch prevents random URLs from being inserted into content-prefs.sqlite in the profile directory as content prefs change (includes site-zoom and perhaps other site prefs?).

- Make Tor Browser exit when not launched from Vidalia

It turns out that on Windows 7 and later systems, the Taskbar attempts to automatically learn the most frequent apps used by the user, and it recognizes Tor Browser as a separate app from Vidalia. This can cause users to try to launch Tor Browser without Vidalia or a Tor instance running. Worse, the Tor Browser will automatically find their default Firefox profile, and connect directly without using Tor. This patch is a simple hack to cause Tor Browser to immediately exit in this case.

- Disable SSL Session ID tracking

This patch is a simple 1-line hack to prevent SSL connections from caching (and then later transmitting) their Session IDs. There was no preference to govern this behavior, so we had to hack it by altering the SSL new connection defaults.

- Provide an observer event to close persistent connections

This patch creates an observer event in the HTTP connection manager to close all keep-alive connections that still happen to be open. This event is emitted by the New Identity button.

- Limit Device and System Specific Media Queries

CSS Media Queries have a fingerprinting capability approaching that of Javascript. This patch causes such Media Queries to evaluate as if the device resolution was equal to the content window resolution.

- Limit the number of fonts per document

Font availability can be queried by CSS and Javascript and is a fingerprinting vector. This patch limits the number of times CSS and Javascript can cause font-family rules to evaluate. Remote @font-face fonts are exempt from the limits imposed by this patch, and remote fonts are given priority over local fonts whenever both appear in the same font-family rule. We do this by explicitly altering the nsRuleNode rule representation itself to remove the local font families before the rule hits the font renderer.

- Rebrand Firefox to Tor Browser

This patch updates our branding in compliance with Mozilla's trademark policy.

- Make Download Manager Memory Only

This patch prevents disk leaks from the download manager. The original behavior is to write the download history to disk and then delete it, even if you disable download history from your Firefox preferences.

- Add DDG and StartPage to Omnibox

This patch adds DuckDuckGo and StartPage to the Search Box, and sets our default search engine to StartPage. We deployed this patch due to excessive Captchas and complete 403 bans from Google.

- Make nsICacheService.EvictEntries() Synchronous

This patch eliminates a race condition with "New Identity". Without it, cache-based Evercookies survive for up to a minute after clearing the cache on some platforms.

- Prevent WebSockets DNS Leak

This patch prevents a DNS leak when using WebSockets. It also prevents other similar types of DNS leaks.

- Randomize HTTP pipeline order and depth

As an experimental defense against Website Traffic Fingerprinting, we patch the standard HTTP pipelining code to randomize the number of requests in a pipeline, as well as their order.
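The randomization can be illustrated independently of the Firefox pipelining code. In this sketch the function name, the maximum depth, and the request names are all hypothetical; it shuffles the pending requests and then splits them into pipelines of random depth.

```python
import random


def randomized_pipelines(requests, max_depth, rng):
    """Shuffle pending requests, then split them into pipelines of random depth."""
    pending = list(requests)
    rng.shuffle(pending)
    batches = []
    while pending:
        depth = rng.randint(1, max_depth)  # inclusive on both ends
        batches.append(pending[:depth])
        pending = pending[depth:]
    return batches


requests = ["/index.html", "/style.css", "/app.js", "/logo.png", "/ad.gif"]
batches = randomized_pipelines(requests, max_depth=3, rng=random.Random(1))
```

Every request is still sent exactly once, but the order and the grouping vary from load to load, which blurs the size and ordering features a traffic classifier would otherwise rely on.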

- Emit an observer event to filter the Drag and Drop URL list

This patch allows us to block external Drag and Drop events from Torbutton. We need to block Drag and Drop because Mac OS and Ubuntu both immediately load any URLs they find in your drag buffer before you even drop them (without using your browser's proxy settings, of course). This can lead to proxy bypass during user activity that is as basic as holding down the mouse button for slightly too long while clicking on an image link.

- Add mozIThirdPartyUtil.getFirstPartyURI() API

This patch provides an API that allows us to more easily isolate identifiers to the URL bar domain.

- Add canvas image extraction prompt

This patch prompts the user before returning canvas image data. Canvas image data can be used to create an extremely stable, high-entropy fingerprint based on the unique rendering behavior of video cards, OpenGL behavior, system fonts, and supporting library versions.

- Return client window coordinates for mouse events

This patch causes mouse events to return coordinates relative to the content window instead of the desktop.

- Do not expose physical screen info to window.screen

This patch causes window.screen to return the display resolution size of the content window instead of the desktop resolution size.

- Do not expose system colors to CSS or canvas

This patch prevents CSS and Javascript from discovering your desktop color scheme and/or theme.

- Isolate the Image Cache per url bar domain

This patch prevents cached images from being used to store third party tracking identifiers.

- nsIHTTPChannel.redirectTo() API

This patch provides HTTPS-Everywhere with an API to perform redirections more securely and without addon conflicts.

- Isolate DOM Storage to first party URI

This patch prevents DOM Storage from being used to store third party tracking identifiers.

- Remove "This plugin is disabled" barrier

This patch removes a barrier that was informing users that plugins were disabled and providing them with a link to enable them. We felt this was poor user experience, especially since the barrier was displayed even for sites with dual Flash+HTML5 video players, such as YouTube.

A. Towards Transparency in Navigation Tracking

The privacy properties of Tor Browser are based upon the assumption that link-click navigation indicates user consent to tracking between the linking site and the destination site. While this definition is sufficient to allow us to eliminate cross-site third party tracking with only minimal site breakage, it is our long-term goal to further reduce cross-origin click navigation tracking to mechanisms that are detectable by attentive users, so they can alert the general public if cross-origin click navigation tracking is happening where it should not be.

In an ideal world, the mechanisms of tracking that can be employed during a link click would be limited to the contents of URL parameters and other properties that are fully visible to the user before they click. However, the entrenched nature of certain archaic web features makes it impossible for us to achieve this transparency goal by ourselves without substantial site breakage. So, instead we maintain a Deprecation Wishlist of archaic web technologies that are currently being (ab)used to facilitate federated login and other legitimate click-driven cross-domain activity but that can one day be replaced with more privacy friendly, auditable alternatives.

Because the total elimination of side channels during cross-origin navigation will undoubtedly break federated login as well as destroy ad revenue, we also describe auditable alternatives and promising web draft standards that would preserve this functionality while still providing transparency when tracking is occurring.

- The Referer Header

We haven't disabled or restricted the Referer ourselves because of the non-trivial number of sites that rely on the Referer header to "authenticate" image requests and deep-link navigation on their sites. Furthermore, restricting it in isolation seems to offer no real privacy benefit, because many sites have begun encoding Referer URL information into GET parameters when they need it to survive http-to-https scheme transitions. Google's +1 buttons are the best example of this activity.

Because of the availability of these other explicit vectors, we believe the main risk of the Referer header is through inadvertent and/or covert data leakage. In fact, a great deal of personal data is inadvertently leaked to third parties through the source URL parameters.

We believe the Referer header should be made explicit. If a site wishes to transmit its URL to third party content elements during load or during link-click, it should have to specify this as a property of the associated HTML tag. With an explicit property, it would then be possible for the user agent to inform the user if they are about to click on a link that will transmit Referer information (perhaps through something as subtle as a different color in the lower toolbar for the destination URL). This same UI notification can also be used for links with the "ping" attribute.

- window.name

window.name is a DOM property that, for some reason, is allowed to retain a persistent value for the lifespan of a browser tab. This property can be used to store identifiers across click navigation, and it is sometimes used for additional XSRF protection and federated login.

It's our opinion that the contents of window.name should not be preserved across cross-origin navigation, but clearing them may break federated login for some sites.

- Javascript link rewriting

In general, it should not be possible for onclick handlers to alter the navigation destination of 'a' tags, silently transform them into POST requests, or otherwise create situations where a user believes they are clicking on a link leading to one URL but ends up at another. This functionality is deceptive and is frequently a vector for malware and phishing attacks. Unfortunately, many legitimate sites also employ such transparent link rewriting, and blanket disabling of this functionality on our part would simply cause Tor Browser to fail to navigate properly on these sites.

Automated cross-origin redirects are one form of this behavior that is possible for us to address ourselves, as they are comparatively rare and can be handled with site permissions.

- Web-Send Introducer

Web-Send is a browser-based link sharing and federated login widget that is designed to operate without relying on third-party tracking or abusing other cross-origin link-click side channels. It has a compelling list of privacy and security features, especially if used as a "Like button" replacement.

- Mozilla Persona

Mozilla's Persona is designed to provide decentralized, cryptographically authenticated federated login in a way that does not expose the user to third party tracking or require browser redirects or side channels. While it does not directly provide the link sharing capabilities that Web-Send does, it is a better solution to the privacy issues associated with federated login than Web-Send is.
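As an illustration of the window.name recommendation in the wishlist above, the following Python sketch models a policy that keeps window.name across same-origin navigation and clears it across cross-origin navigation. It is a model of the proposed behavior under stated assumptions, not code from Tor Browser: the function names are hypothetical, and "origin" is taken to be the usual (scheme, host, port) triple with default ports filled in for http and https.

```python
from typing import Optional, Tuple
from urllib.parse import urlsplit

# Default ports, so that https://a.example and https://a.example:443 match.
DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url: str) -> Tuple[str, str, Optional[int]]:
    """Return the (scheme, host, port) triple for a URL."""
    parts = urlsplit(url)
    scheme = parts.scheme.lower()
    port = parts.port or DEFAULT_PORTS.get(scheme)
    return (scheme, (parts.hostname or "").lower(), port)


def window_name_after_navigation(
    current_url: str, destination_url: str, window_name: str
) -> str:
    """Hypothetical policy: keep window.name only for same-origin navigation."""
    if origin(current_url) == origin(destination_url):
        return window_name
    # Cross-origin navigation: drop the value so that it cannot carry
    # an identifier from the linking site to the destination site.
    return ""
```

For example, navigating from `https://a.example/login` to `https://a.example/home` keeps the value, while navigating to `https://b.example/` yields an empty string. As noted above, a policy like this may break federated login on sites that pass state through window.name.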